aka: Why are Kubernetes finalizers making my life hard?

Are any of your pods stuck in a Terminating state? And they don’t seem to ever disappear? Or even one of your PersistentVolumeClaims just refuses to become… “unclaimed” and deleted? Did Prometheus wake you to complain about the pod not being in an acceptable state?

The Quick Fix

Is there a finalizer?

First thing I’d suggest is to check if your resource has a finalizer. If it does have one, then that’s probably why it’s stuck…

❗️Kubernetes uses American spelling, which is why you’ll keep seeing me (a Melbourne, Australia based person) using a mix of “finalizer” and the more orthographically correct spelling, “finaliser”.

Such is life.

Here’s a teeny tiny example of what a finalizer looks like… Here’s my pod spec:

# failing-pod.yml
apiVersion: v1
kind: Pod
metadata:
  name: demo-pod
  namespace: demo-finalizers
  finalizers:
    - demo.finalizer.srcinnovations.com.au/failing
spec:
  containers:
    - name: nginx
      image: nginx

It’s just a simple nginx pod, but notably, it has the finalizer called demo.finalizer.srcinnovations.com.au/failing.

(Yes, I did go and write a demo finalizer for this blog post so that I can get better screenshots for it… Ain’t I nice? 😇)

(All this demo finaliser does is not finalise a pod’s deletion. )

$ kubectl apply -f ./failing-pod.yml
pod/demo-pod created
$ kubectl get pods
NAME       READY   STATUS    RESTARTS   AGE
demo-pod   1/1     Running   0          79s

Now the demo-pod is running. If I were to kubectl describe pod it, I’d see no signs that there is a finalizer waiting to ambush me. However, if I were to get its full details in YAML format like so…

$ kubectl get pod demo-pod -o yaml
apiVersion: v1
kind: Pod
metadata:
  annotations:
    <snip>
  creationTimestamp: "2024-08-29T14:01:04Z"
  finalizers:
  - demo.finalizer.srcinnovations.com.au/failing
  name: demo-pod
  <snip>
spec:
  containers:
  - image: nginx
    <snip>
status:
  conditions:
  <snip>

… you can see that the finalizers array exists under metadata, and that there is a finalizer there.

Now I attempt to delete the pod…

$ kubectl delete pod demo-pod
pod "demo-pod" deleted
### And now I keep waiting...
### ...and my demo finalizer refuses to finalise the pod...
### ...and waiting...
### ...and the demo finalizer STILL refuses to finalise the pod...
### ...and waiting...
### ...and I give up...
### ...and I press ctrl-c to quit
$

Get the pods again, and this time it says…

$ kubectl get pods
NAME       READY   STATUS        RESTARTS   AGE
demo-pod   0/1     Terminating   0          23m

My custom finalizer controller is refusing to “finalise” the deletion. Thus the pod is stuck in the Terminating state. And no matter how long you wait, nothing happens. Even doing a --force makes no difference.

$ kubectl delete pod demo-pod --force
Warning: Immediate deletion does not wait for confirmation that the running resource has been terminated. The resource may continue to run on the cluster indefinitely.
pod "demo-pod" force deleted

(Which is actually implied in the “Warning”.)
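If you’d rather spot every object in this state at once instead of checking one pod at a time, the output of kubectl get <kind> -o json can be filtered for items that have a deletionTimestamp and a non-empty finalizers list. Here’s a minimal sketch in Python. The function name stuck_objects and the sample data (including the deletion timestamp) are made up for illustration; only the .metadata.deletionTimestamp and .metadata.finalizers fields come from Kubernetes itself.

```python
import json


def stuck_objects(kubectl_json: str) -> list[tuple[str, list[str]]]:
    """Return (name, finalizers) for objects that are marked for deletion
    but still hold at least one finalizer.

    `kubectl_json` is the text printed by `kubectl get <kind> -o json`,
    which is a List object with an `items` array.
    """
    stuck = []
    for item in json.loads(kubectl_json).get("items", []):
        meta = item.get("metadata", {})
        finalizers = meta.get("finalizers") or []
        # deletionTimestamp is only set once a delete has been requested.
        if meta.get("deletionTimestamp") and finalizers:
            stuck.append((meta.get("name", "<unknown>"), finalizers))
    return stuck


# Hypothetical sample shaped like `kubectl get pods -o json` output.
sample = json.dumps({
    "kind": "List",
    "items": [
        {"metadata": {"name": "healthy-pod"}},
        {"metadata": {
            "name": "demo-pod",
            "deletionTimestamp": "2024-08-29T14:05:00Z",
            "finalizers": ["demo.finalizer.srcinnovations.com.au/failing"],
        }},
    ],
})

for name, finalizers in stuck_objects(sample):
    print(f"{name} is waiting on: {', '.join(finalizers)}")
```

Against a real cluster you would swap the sample for the actual kubectl output, for example by reading it from standard input.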

Since the finalizer is the problem… And since you’re trying to delete the resource anyway… The solution is simple…

Delete the finalizer! 😅

The easiest way to do this is to patch the change in with a JSON merge patch (that’s what --type=merge selects):

$ kubectl patch pod demo-pod -p '{"metadata":{"finalizers":[]}}' --type=merge

This patch will take the current finalizers array and replace it with an empty array.
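Why does the whole array get replaced while everything else on the pod is left alone? That comes from JSON merge patch semantics (RFC 7396), which is what --type=merge asks for: objects are merged key by key, but arrays are swapped out wholesale. Here’s a small Python sketch of those rules. It is my own illustration of the RFC’s algorithm, not the code the API server runs, and the pod dictionary is a trimmed-down stand-in for the real object.

```python
import copy


def json_merge_patch(target, patch):
    """Apply a JSON merge patch (RFC 7396) and return the patched copy."""
    if not isinstance(patch, dict):
        # Scalars and arrays replace the target value wholesale.
        return copy.deepcopy(patch)
    result = copy.deepcopy(target) if isinstance(target, dict) else {}
    for key, value in patch.items():
        if value is None:
            result.pop(key, None)  # null means "remove this key"
        else:
            result[key] = json_merge_patch(result.get(key), value)
    return result


# Trimmed-down stand-in for the demo pod.
pod = {
    "metadata": {
        "name": "demo-pod",
        "namespace": "demo-finalizers",
        "finalizers": ["demo.finalizer.srcinnovations.com.au/failing"],
    },
    "spec": {"containers": [{"name": "nginx", "image": "nginx"}]},
}

patched = json_merge_patch(pod, {"metadata": {"finalizers": []}})
print(patched["metadata"])
```

The name and namespace survive because the patch never mentions them; only the finalizers key is touched, and its array is replaced by the empty one.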

Once completed, if you were to check your pods again…

$ kubectl get pods
No resources found in demo-finalizers namespace.

It’s been deleted!

Read on for some relevant implications of having manually deleted the finalizer like that…

Let’s Get Some Details

About Finalizers

The Kubernetes documentation has things to say about Finalisers – and it’s informative reading (albeit a little dry).

Fundamentally, the existence of things in the .metadata.finalizers array means that a resource being marked for deletion will not be deleted until after all of the finalizers have been cleared from the array.

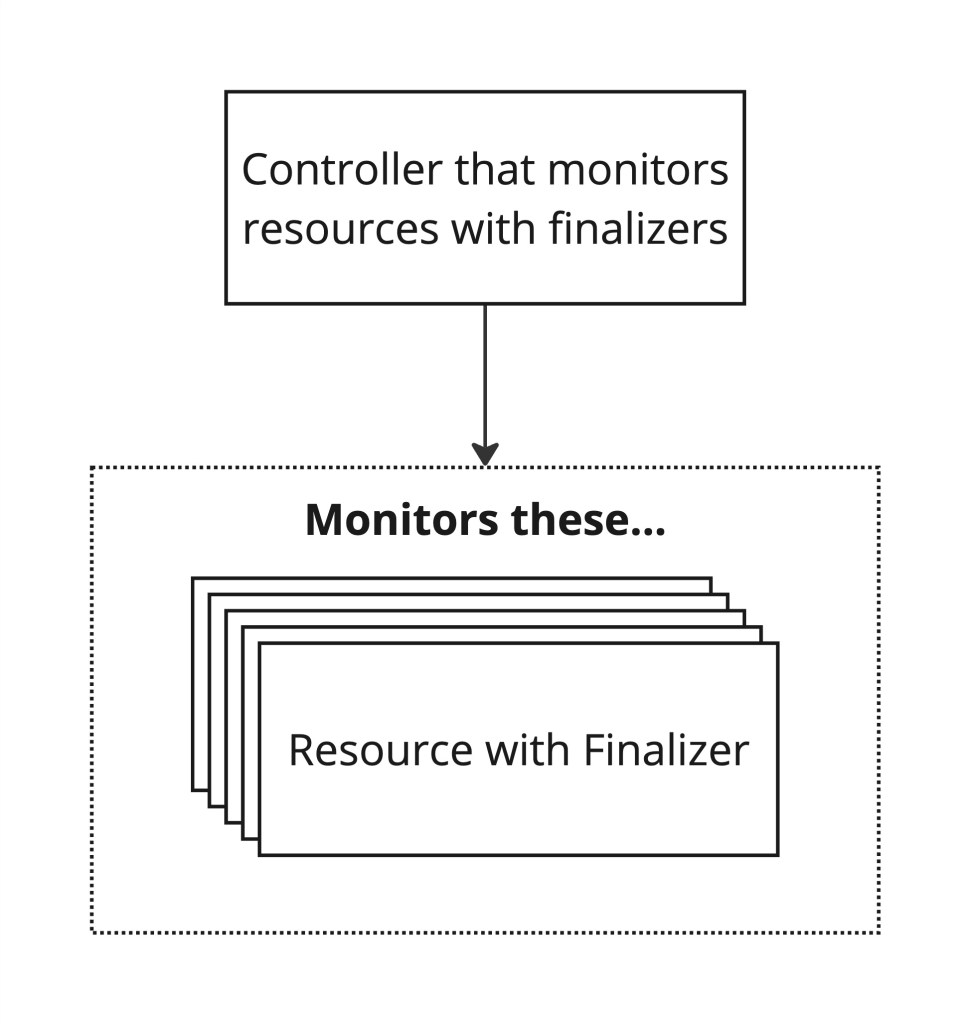

This also implies that something else is responsible for managing these items in the .metadata.finalizers array.

These finalizers are often monitored and managed by custom controllers. When a watched resource that has been marked for deletion includes a finalizer string that the finalizer controller cares about, then the controller jumps into action to execute some final cleanup tasks before allowing the resource to be deleted.
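The deletion rule itself is small enough to model in a few lines of Python. This is a toy sketch of the behaviour described above, not how the real API server is written: the class and method names are invented, and the "Running" / "Terminating" / "Gone" labels are a simplification of what kubectl would show.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Resource:
    name: str
    finalizers: list[str] = field(default_factory=list)
    deletion_timestamp: Optional[datetime] = None


class ToyApiServer:
    """Toy model of the deletion rules only; not the real API server."""

    def __init__(self) -> None:
        self.store: dict[str, Resource] = {}

    def create(self, resource: Resource) -> None:
        self.store[resource.name] = resource

    def delete(self, name: str) -> None:
        # A delete request only marks the object; it does not remove it yet.
        resource = self.store[name]
        if resource.deletion_timestamp is None:
            resource.deletion_timestamp = datetime.now(timezone.utc)
        self._collect(name)

    def remove_finalizer(self, name: str, finalizer: str) -> None:
        # This is what a finalizer's controller (or a manual patch) does.
        resource = self.store[name]
        resource.finalizers = [f for f in resource.finalizers if f != finalizer]
        self._collect(name)

    def status(self, name: str) -> str:
        if name not in self.store:
            return "Gone"
        marked = self.store[name].deletion_timestamp is not None
        return "Terminating" if marked else "Running"

    def _collect(self, name: str) -> None:
        # The object disappears only when it is marked AND has no finalizers.
        resource = self.store[name]
        if resource.deletion_timestamp is not None and not resource.finalizers:
            del self.store[name]


api = ToyApiServer()
api.create(Resource("demo-pod", ["demo.finalizer.srcinnovations.com.au/failing"]))
api.delete("demo-pod")
print(api.status("demo-pod"))   # still present, marked for deletion
api.remove_finalizer("demo-pod", "demo.finalizer.srcinnovations.com.au/failing")
print(api.status("demo-pod"))   # removed once the list is empty
```

Notice that delete never removes anything by itself when a finalizer is present; the removal only happens as a side effect of the finalizers list becoming empty.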

That also means that if a finaliser did not complete – leaving you with a stuck resource – then there is a possibility that something else was not done. Removing the finaliser might have removed the resource from your cluster, but it does not mean that the appropriate cleanup tasks have been completed. You MAY need to manually remediate what the finalizer was intended to do.

For example, if it’s a PersistentVolumeClaim that you were trying to delete, then the finaliser was (probably) trying to delete the underlying infrastructure’s dynamically provisioned volume. If the persistent volume controller failed to delete the underlying piece of storage, then the finaliser won’t be removed. Forcing the resource to be removed might then mean that the reference to it within Kubernetes is gone, but the actual cloud storage is still there, and still costing you money!

It’s not always straightforward to know when a finaliser exists. In some use cases the .metadata.finalizers field is added, without your knowledge, by a Mutating Admission Webhook when you run kubectl apply!

When Might a Finalizer be Used in a Custom Controller?

These controllers can be used for multiple things, for example as part of Day 2 Operators that are helping manage a custom resource.

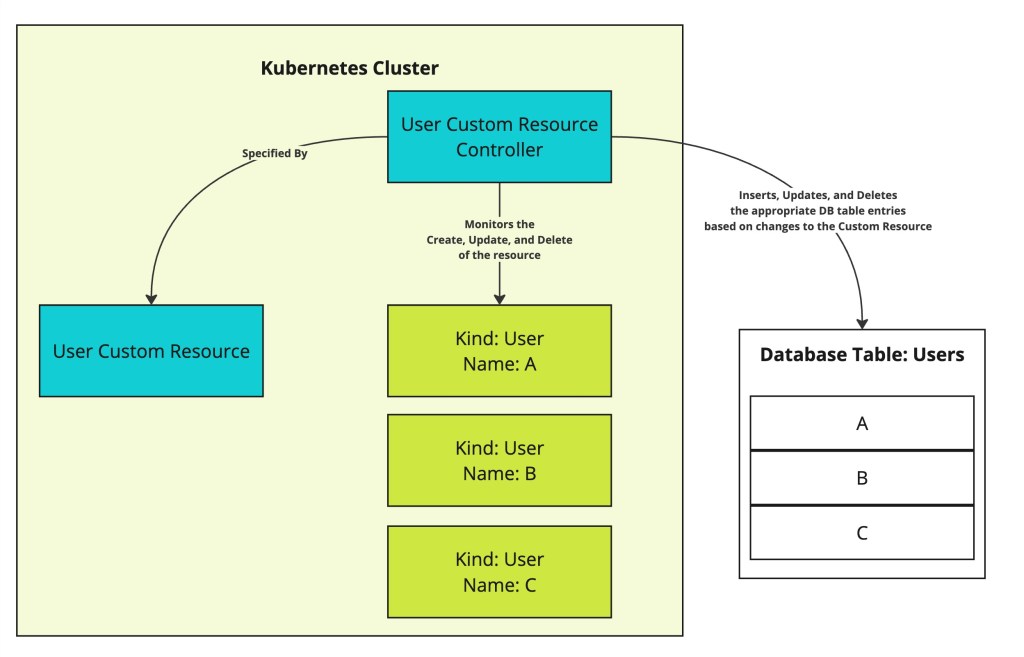

Let’s say you need a declarative configuration based approach to Create, Update, and Delete users from within a Kubernetes cluster, and have it perform the appropriate User actions to your user store in a Users database…

You’d have a User Custom Resource, and a User Custom Resource Controller to manage it.

As new users are added or existing users are modified, the User manifest is updated, and the user references in the database are inserted or updated as appropriate.

However, when the User is deleted, you do not want the resource to be removed until after the controller has actually deleted the user from the database.

This is where a finaliser makes perfect sense. The resource is marked for deletion; the controller notices and deletes the user entry from the database table. Once that succeeds, the controller removes its finaliser from the .metadata.finalizers array, and Kubernetes can then actually delete the User resource.

Under a scenario where the user controller is unable to successfully delete the user from the database table (e.g. failed database connection)… Then the LAST thing that you want is for the User resource to disappear from the cluster – implying that the actual user has been removed, but in reality, the user controller was unable to actually do so.

You’d want to actually remediate that dangling User entry in the database before you delete the referencing User resource.
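To make the flow concrete, here’s a rough Python sketch of what one pass of such a User controller might look like. Everything in it is assumed for illustration: the finalizer name users.example.com/db-cleanup, the FakeUserDatabase class, and the reconcile function are hypothetical, and a real controller would talk to the Kubernetes API rather than mutate a dictionary.

```python
FINALIZER = "users.example.com/db-cleanup"  # hypothetical finalizer name


class FakeUserDatabase:
    """Stand-in for the Users database; `available` simulates an outage."""

    def __init__(self) -> None:
        self.users: set[str] = set()
        self.available = True

    def upsert(self, username: str) -> None:
        self._check()
        self.users.add(username)

    def delete(self, username: str) -> None:
        self._check()
        self.users.discard(username)

    def _check(self) -> None:
        if not self.available:
            raise ConnectionError("database unreachable")


def reconcile(user: dict, db: FakeUserDatabase) -> dict:
    """One reconcile pass over a User resource (mutated in place and returned)."""
    meta = user["metadata"]
    finalizers = meta.setdefault("finalizers", [])
    if meta.get("deletionTimestamp") is None:
        # Live resource: register our finalizer, then sync the database.
        if FINALIZER not in finalizers:
            finalizers.append(FINALIZER)
        db.upsert(meta["name"])
        return user
    if FINALIZER in finalizers:
        try:
            db.delete(meta["name"])
        except ConnectionError:
            # Cleanup failed: keep the finalizer so the resource stays
            # visible as Terminating and the pass can be retried later.
            return user
        finalizers.remove(FINALIZER)
    return user


db = FakeUserDatabase()
user = reconcile({"metadata": {"name": "alice"}}, db)

# Simulate a delete request arriving while the database is down.
user["metadata"]["deletionTimestamp"] = "2024-08-29T14:05:00Z"
db.available = False
reconcile(user, db)
print(user["metadata"]["finalizers"])  # finalizer kept while the database is down

db.available = True
reconcile(user, db)
print(user["metadata"]["finalizers"])  # finalizer removed after cleanup succeeds
```

The important design choice is the order of operations in the deletion branch: the finalizer is only removed after the database delete has succeeded, so a failed cleanup leaves the User resource stuck (and visible) rather than silently gone.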

Want More?

Kubernetes is a fun, entertaining, and very clever piece of kit which is also – unfortunately – very complicated. These finalizers are a perfect example of a trip line for those who are not across the minute details of Kubernetes, and I hope that this article has given you a quick and easy solution to resolve resources that are stuck in a Terminating state. It may have also given you even more insight into finalizers, why they exist, and how they may be used to great effect.

Finalisers are especially relevant when using Operators to improve your DevSecOps capabilities, and if that’s the space you are looking at and would like some conversations about, then you should reach out to us to say “Hi! 😃”.